You just spent two hours having a brilliant whiteboard session, but the creative momentum is about to die because someone has to spend the next six hours manually drawing rectangles and configuring auto-layout in Figma. The reality of modern product design is that rapid prototyping in UX usually isn’t rapid at all; it’s pixel-pushing disguised as progress.

If your team still translates intent into wireframes screen by screen, your design stack is slowing validation instead of accelerating it. Stakeholders want high fidelity immediately. Engineers want production-ready structure. Designers want to test flows, not assemble frames.

The shift happening right now isn’t about faster mockups. It’s about removing the translation layer between idea and testable interface entirely.

The Evolution of Rapid Prototyping in UX Design

Rapid prototyping used to mean sketching fast so you could test early. Today it means generating testable, high-fidelity flows before lunch.

The original reason low-fidelity wireframes existed was simple: high-fidelity design was expensive. Designers needed a cheap way to explore structure before committing to UI assembly. That constraint no longer exists.

When AI can generate brand-aligned screens instantly, starting with gray boxes isn’t discipline. It’s friction.

Stakeholders don’t interpret wireframes the way designers think they do. They fixate on missing color instead of evaluating navigation logic. That forces designers to defend fidelity instead of validating interaction models.

High-fidelity prototypes now produce better usability testing signals because users react to something that feels real. The cost of iteration dropped. The value of realism increased.

Most teams still treat wireframing as mandatory. That assumption is outdated.

If your workflow still starts with boxes, arrows, and placeholder typography, you’re solving yesterday’s bottleneck.

Instead, modern rapid prototyping in UX begins with intent mapping.

Intent mapping replaces layout specification with structured constraints:

- starting user context

- system limitations

- desired outcomes

- business logic

- component rules

This is the shift from drawing interfaces to generating systems.

And it connects directly to how designers already use AI in production workflows: not as inspiration, but as structure generation. (See how teams apply this in real projects in How Designers Actually Use AI in Real Projects.)
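One way to make an intent map concrete is to treat it as a typed record rather than a page of notes. The sketch below is illustrative only: the `IntentMap` class, its field names, and the example values are assumptions, not the format of any particular tool. It holds the five constraint groups listed above and flags any group that is still empty.

```python
from dataclasses import dataclass, field, fields


@dataclass
class IntentMap:
    """Hypothetical container for the five constraint groups of an intent map."""
    starting_user_context: list[str] = field(default_factory=list)
    system_limitations: list[str] = field(default_factory=list)
    desired_outcomes: list[str] = field(default_factory=list)
    business_logic: list[str] = field(default_factory=list)
    component_rules: list[str] = field(default_factory=list)

    def missing_groups(self) -> list[str]:
        """Return the names of constraint groups that have no entries yet."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


# Example values are invented for illustration.
intent = IntentMap(
    starting_user_context=["New admin, arriving from an invite email"],
    system_limitations=["Identity check can take up to 24 hours"],
    desired_outcomes=["Account activated in one session"],
    business_logic=["Bank details required before first payout"],
)

print(intent.missing_groups())  # ['component_rules']
```

A check like this is cheap, and it surfaces the gap (here, no component rules) before anything gets generated.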

How AI Tools Compress the 5-Day Design Sprint

The five-day Google Ventures design sprint used to feel fast. Today it’s mostly scheduling overhead.

The real bottleneck wasn’t mapping ideas. It was building prototypes.

AI removes that bottleneck.

From Intent Mapping to High-Fidelity in 48 Hours

Traditional sprint pacing artificially isolates prototyping to Thursday. That forces teams to rush assembly and test weak artifacts.

AI-native workflows collapse the timeline.

Day 1: Intent mapping replaces wireframing

Instead of drawing structure manually, teams define:

- user starting context

- constraints

- success states

- required component behaviors

- navigation transitions

These become structured prompts that function like technical briefs.

Where teams fail: writing vague prompts like “design a modern fintech dashboard.” Generic prompts generate generic interfaces.
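To show what a prompt that works like a technical brief might look like, here is a minimal sketch that turns the Day 1 inputs into a sectioned brief and refuses to render one with empty sections. The `render_brief` function, the section layout, and the example content are assumptions for illustration; real tools define their own prompt formats.

```python
# Section names mirror the Day 1 list above; the layout itself is an assumption.
SECTIONS = (
    "user starting context",
    "constraints",
    "success states",
    "required component behaviors",
    "navigation transitions",
)


def render_brief(inputs: dict[str, list[str]]) -> str:
    """Render Day 1 inputs as a sectioned brief; fail loudly on empty sections."""
    empty = [name for name in SECTIONS if not inputs.get(name)]
    if empty:
        raise ValueError(f"brief is underspecified, missing: {', '.join(empty)}")
    blocks = []
    for name in SECTIONS:
        lines = "\n".join(f"- {item}" for item in inputs[name])
        blocks.append(f"{name.upper()}\n{lines}")
    return "\n\n".join(blocks)


# Invented example content.
brief = render_brief({
    "user starting context": ["Returning user, logged in, on mobile"],
    "constraints": ["Use existing button and input components only"],
    "success states": ["Transfer confirmed with a visible reference number"],
    "required component behaviors": ["Amount field validates on blur"],
    "navigation transitions": ["Review screen returns to edit without data loss"],
})
print(brief)
```

A one-line request like “design a modern fintech dashboard” would fail this check immediately, which is the point: the brief forces the specifics that a vague prompt leaves out.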

Day 2: Flow generation replaces frame assembly

Instead of linking auto-layout frames manually, AI tools generate entire multi-screen flows simultaneously.

That means:

- tokens stay consistent

- spacing stays locked

- navigation logic persists

- component states remain synchronized

The difference is momentum. Designers stay in strategy mode instead of assembly mode.

If you’ve ever hit blank-canvas paralysis at this stage, that’s not a creativity problem; it’s a tooling problem. This is exactly the gap described in Blank Canvas Syndrome.

Validation happens immediately

Because generated prototypes are interactive, usability testing starts earlier.

Engineering translation disappears when prototypes export structured components directly.

Compare timelines:

| Phase | Traditional Timeline | AI Workflow |
|---|---|---|
| Discovery | 1–3 weeks | ~1 week |
| Wireframing | 2–4 weeks | hours–days |
| UI design | 1–2 weeks | days–1 week |
| Interactive prototyping | 1–5 weeks | days–1 week |
| Total | 8–20+ weeks | 4–8 weeks |

This isn’t incremental acceleration. It cuts the lifecycle nearly in half.

The Dangers of “Vibe Coding” and Design System Drift

There’s a growing belief that designers should skip design tools entirely and generate apps directly with AI coding assistants.

That shortcut creates UX debt faster than it creates prototypes.

“Vibe coding” produces statistically plausible interfaces, not structurally correct ones.

Common failure patterns include:

- broken accessibility logic

- inconsistent spacing tokens

- unpredictable component states

- navigation hierarchy mismatches

- off-brand typography systems

Developers inherit cleanup work. Designers inherit repair work.

And the organization inherits long-term maintenance debt.

The deeper problem is design system drift.

When AI generates screens without token enforcement, every new screen becomes a deviation risk. Typography scales shift. Color palettes mutate. Spacing logic fragments.

This is why production teams increasingly treat prompts as technical specifications instead of creative suggestions.
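Token enforcement can be as simple as comparing what a generated screen uses against what the design system defines. The following is a rough sketch under stated assumptions: the `TOKENS` library, the token names, and the shape of the generated output are all hypothetical, and a real pipeline would read them from the team's own token files.

```python
# Hypothetical token library: token name -> defined value.
TOKENS = {
    "color.primary": "#1A56DB",
    "color.surface": "#FFFFFF",
    "space.md": "16px",
    "font.size.body": "16px",
}


def find_drift(screen_styles: dict[str, dict[str, str]]) -> list[str]:
    """Report every style value that differs from its token's defined value.

    screen_styles maps a screen name to {token name: value used on that screen}.
    """
    problems = []
    for screen, styles in screen_styles.items():
        for token, value in styles.items():
            expected = TOKENS.get(token)
            if expected is None:
                problems.append(f"{screen}: unknown token {token}")
            elif value.lower() != expected.lower():
                problems.append(f"{screen}: {token} is {value}, expected {expected}")
    return problems


# Invented output from two generated screens.
generated = {
    "signup": {"color.primary": "#1A56DB", "space.md": "16px"},
    "verify": {"color.primary": "#1B58E0", "space.md": "18px"},
}
for problem in find_drift(generated):
    print(problem)
# verify: color.primary is #1B58E0, expected #1A56DB
# verify: space.md is 18px, expected 16px
```

The near-miss blue on the second screen is the kind of drift that passes a visual glance and fails a mechanical check.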

Accessibility suffers too unless constraints are explicit. If you’re not embedding requirements intentionally, you’re trusting probability instead of structure. That’s exactly why structured prompting matters for compliance workflows like those covered in Prompting for Accessibility.
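Color contrast is one accessibility requirement that can be stated as an explicit, checkable constraint. The sketch below uses the relative-luminance and contrast-ratio formulas from WCAG 2.x and the 4.5:1 AA threshold for normal-size text; the function names are our own, and this covers only contrast, not accessibility as a whole.

```python
def relative_luminance(hex_color: str) -> float:
    """Relative luminance of an sRGB color, per the WCAG 2.x definition."""
    hex_color = hex_color.lstrip("#")
    channels = [int(hex_color[i:i + 2], 16) / 255 for i in (0, 2, 4)]
    # Convert each gamma-encoded channel to linear light.
    linear = [
        c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4
        for c in channels
    ]
    r, g, b = linear
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground: str, background: str) -> float:
    """WCAG contrast ratio, from 1.0 (identical) up to 21.0 (black on white)."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


def meets_aa_body_text(foreground: str, background: str) -> bool:
    """True when the pair reaches the 4.5:1 AA threshold for normal text."""
    return contrast_ratio(foreground, background) >= 4.5


print(meets_aa_body_text("#767676", "#FFFFFF"))  # True
print(meets_aa_body_text("#777777", "#FFFFFF"))  # False
```

Those two grays are nearly indistinguishable by eye, yet only one passes. That is the gap between trusting probability and enforcing structure.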

Rapid prototyping should accelerate validation, not introduce cleanup work.

Practical Scenarios for AI-Driven Rapid Prototyping

Theoretical speed improvements don’t convince product teams. Workflow transformations do.

Here’s what rapid prototyping in UX actually looks like under pressure.

Optimizing B2B SaaS Onboarding Flows

A fintech platform sees a 60% drop-off rate on a 20-field activation form.

The traditional fix:

- map five progressive disclosure steps

- rebuild component states

- wire interactions manually

- assemble prototype screens

- validate flow

Timeline: three days minimum.

The AI workflow:

- ingest 20 existing fields

- define disclosure structure

- enforce trust signals

- generate five screens simultaneously

- preserve component states automatically

Timeline: under two minutes.

Generic generators fail here because they ignore compliance cues and trust-signal color logic typical in fintech interfaces.

Flow-aware generators succeed because they maintain system constraints across screens.
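The structural part of that restructuring, spreading 20 fields across five steps, is simple arithmetic. Here is a minimal sketch, assuming the fields are already in a sensible order; the `split_into_steps` helper and the placeholder field names are hypothetical, and real progressive disclosure would group fields by meaning rather than by count alone.

```python
def split_into_steps(field_names: list[str], step_count: int) -> list[list[str]]:
    """Split an ordered field list into step_count near-equal consecutive steps."""
    if step_count < 1:
        raise ValueError("step_count must be at least 1")
    base, extra = divmod(len(field_names), step_count)
    steps, start = [], 0
    for index in range(step_count):
        # Earlier steps absorb the remainder when the split is uneven.
        size = base + (1 if index < extra else 0)
        steps.append(field_names[start:start + size])
        start += size
    return steps


# Placeholder names standing in for the 20 existing form fields.
form_fields = [f"field_{n}" for n in range(1, 21)]
steps = split_into_steps(form_fields, 5)
print([len(step) for step in steps])  # [4, 4, 4, 4, 4]
```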

Executing Rapid Navigation Pivots

Thursday afternoon. Executives want to replace top navigation with a collapsible sidebar before Friday review.

Traditional response:

- detach navigation components

- rebuild layout constraints

- resize frames manually

- relink flows

- introduce hidden debt everywhere

AI response:

- parse navigation taxonomy

- restructure hierarchy automatically

- regenerate constraints across screens

- update layouts simultaneously

The difference isn’t speed alone. It’s structural consistency.

The 48-Hour Clinical Dashboard Sprint

A pharmaceutical team needs a working PK/PD simulation dashboard prototype before a stakeholder review.

Engineering is unavailable.

Traditional outcome:

- static mockups that fail to demonstrate multi-dose simulation logic

AI-driven outcome:

- generate visualization architecture

- structure simulation flows

- export interactive components

- demonstrate exposure distribution behavior

Timeline: two days.

That’s the difference between showing screens and showing systems.

Why Single-Screen AI Generators Fail at UX Logic

Most AI UI tools generate screenshots. Software requires flows.

Single-screen generators suffer from context window collapse. They forget the design system after the first screen.

Symptoms include:

- typography scale shifts between steps

- color token drift

- navigation inconsistencies

- layout constraint mismatches

Designing products means designing transitions.

If your tool can’t maintain token consistency across a checkout sequence, onboarding funnel, or dashboard workflow, it’s producing inspiration, not prototypes.
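Cross-screen consistency is also something you can test rather than eyeball. The sketch below assumes each screen in a flow can be reduced to a map of style roles to values; the `inconsistent_roles` function, the role names, and the sample checkout flow are invented for illustration.

```python
from collections import defaultdict


def inconsistent_roles(flow: dict[str, dict[str, str]]) -> dict[str, dict[str, list[str]]]:
    """Return style roles whose value differs between screens in one flow.

    flow maps screen name -> {style role: value}. The result maps each
    inconsistent role -> {value: [screens using that value]}.
    """
    usage = defaultdict(lambda: defaultdict(list))
    for screen, styles in flow.items():
        for role, value in styles.items():
            usage[role][value].append(screen)
    # Keep only roles that appear with more than one distinct value.
    return {role: dict(values) for role, values in usage.items() if len(values) > 1}


# Invented three-screen checkout flow with one drifting heading size.
checkout = {
    "cart": {"heading.size": "24px", "button.color": "#0F766E"},
    "shipping": {"heading.size": "24px", "button.color": "#0F766E"},
    "payment": {"heading.size": "22px", "button.color": "#0F766E"},
}
print(inconsistent_roles(checkout))
# {'heading.size': {'24px': ['cart', 'shipping'], '22px': ['payment']}}
```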

This is the same reason prompt libraries matter. Structure determines output reliability. Teams already using repeatable prompt patterns move dramatically faster than those improvising each generation session. See examples in Real Prompts We Use.

Rapid prototyping in UX requires flow-level generation, not screen-level styling.

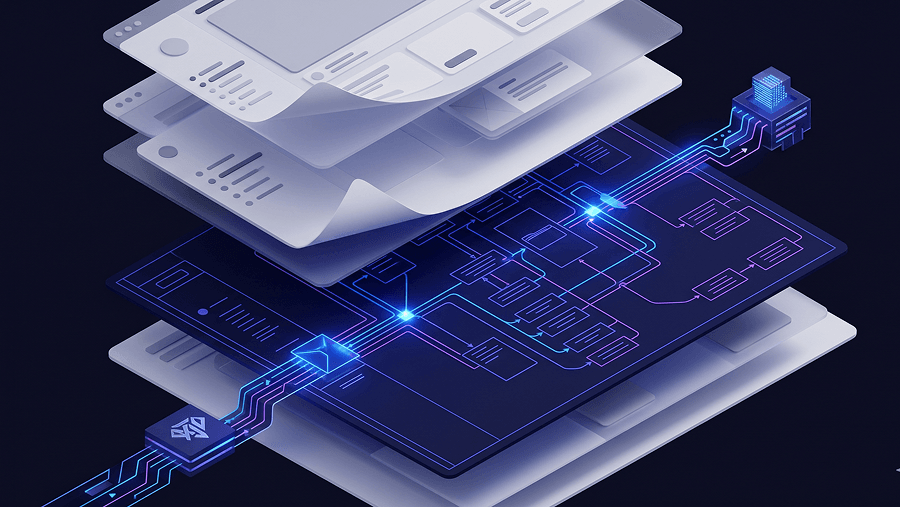

Designing Systems with UXMagic Flow Mode

Most generators design frames. UXMagic designs flows.

Flow Mode generates multi-step journeys while locking navigation structure, spacing tokens, and component rules across screens. Instead of verifying each screen manually, designers review logic across the entire sequence.

That eliminates the single-screen hallucination problem completely.

It also solves the second major failure point: design system drift.

Instead of generating statistically likely styling, UXMagic enforces existing design tokens directly inside the generation process. Typography, spacing variables, and color systems remain synchronized from prompt to export.

This matters during developer handoff.

When prototypes export production-ready UI aligned with token libraries, engineers don’t rebuild interfaces from scratch. The translation tax disappears.

Unlike code-first generators that remove visual control, Flow Mode keeps designers inside a spatial reasoning environment while preserving code parity underneath.

That’s the difference between generating interfaces and generating architecture.

If you’re structuring workflows intentionally instead of experimenting randomly, this approach aligns closely with the human-in-the-loop methodology described in Human in the Loop AI Design.

Quick Takeaways

- Stop starting with gray-box wireframes when high-fidelity prototypes can be generated instantly.

- Replace five-day sprint assembly bottlenecks with intent-mapping workflows.

- Demand flow-level generation instead of isolated screen outputs.

- Treat prompts as structured technical briefs, not creative suggestions.

- Export production-ready code to eliminate developer handoff translation loops.

Prediction: Within 12 months, teams that still prototype screen-by-screen instead of flow-by-flow will feel as slow as teams that still export PNGs for developer handoff today.

Rapid prototyping in UX is no longer about drawing faster; it’s about validating flows earlier. Teams that move from screen-by-screen assembly to intent-driven flow generation remove the biggest bottleneck in product design: translation. The advantage now goes to teams that prototype systems, not screens.

Generate Your First Multi-Screen Flow in Minutes

Stop rebuilding layouts screen by screen. Try UXMagic free and generate a production-ready user flow directly from intent without breaking your design system.